One of the abstractions Elixir provides around processes is the GenServer module. A GenServer is a process like any other Elixir process and it can be used to keep state, execute code asynchronously and so on.

Recently, while using Elixir processes (specifically GenServer), I found myself facing an issue that often occurs in other programming languages: the dreaded memory leaks.

Use Case / Problem

Basically, I have an application that, when started, initializes multiple GenServers in parallel, each of which makes multiple calls to external APIs. The processes execute their work every 5 minutes and hold only short-lived data.
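A minimal sketch of the kind of worker described above follows. It assumes each GenServer reschedules itself with `Process.send_after/3`. The module name `PeriodicWorker`, the `:work` message and the `fetch_from_api/0` placeholder are hypothetical, because the post does not show the real API calls.

```elixir
defmodule PeriodicWorker do
  # Hypothetical worker: runs a job every `interval` ms and keeps only a
  # small counter in its state between runs.
  use GenServer

  # Default interval of 5 minutes, as in the use case above.
  @interval :timer.minutes(5)

  def start_link(opts \\ []), do: GenServer.start_link(__MODULE__, opts)

  @impl true
  def init(opts) do
    interval = Keyword.get(opts, :interval, @interval)
    # Schedule the first run; later runs are scheduled by handle_info/2.
    schedule_work(interval)
    {:ok, %{interval: interval, runs: 0}}
  end

  @impl true
  def handle_info(:work, state) do
    # Stand-in for the external API calls: the result is short-lived and
    # is deliberately not stored in the state.
    _response = fetch_from_api()
    schedule_work(state.interval)
    {:noreply, %{state | runs: state.runs + 1}}
  end

  @impl true
  def handle_call(:runs, _from, state), do: {:reply, state.runs, state}

  defp schedule_work(interval), do: Process.send_after(self(), :work, interval)

  # Hypothetical placeholder that allocates some throwaway data.
  defp fetch_from_api, do: Enum.map(1..1_000, &Integer.to_string/1)
end

# Usage with a short interval so the effect is visible quickly.
{:ok, pid} = PeriodicWorker.start_link(interval: 10)
Process.sleep(100)
IO.inspect(GenServer.call(pid, :runs), label: "runs so far")
```

Even though nothing from `fetch_from_api/0` is kept in the state, the heap the process grew to build it is not necessarily released right away. That is the situation the rest of this post investigates.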

Initially, I did not find any problems, but as the number of processes grew, I noticed that each process kept unnecessary data in memory after its first run.

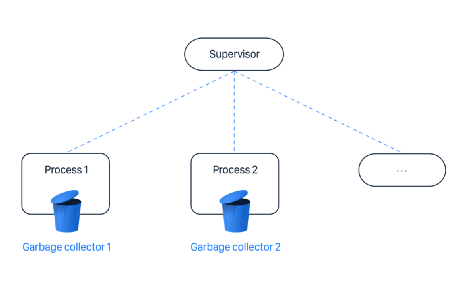

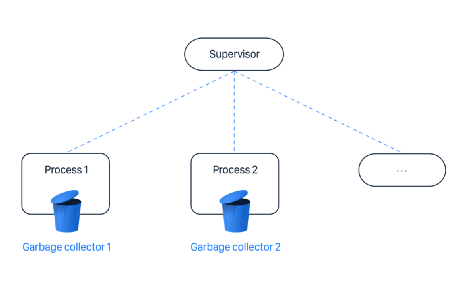

Elixir supervision tree and garbage collectors

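Similar numbers can also be read from code. `Process.info/2` with the `:memory` key returns the bytes used by one process, including its stack, heap and internal structures. `:erlang.memory(:total)` returns the total for the node. The `MemoryReport` module below is a hypothetical helper and not part of the author's application.

```elixir
defmodule MemoryReport do
  # Hypothetical helper for memory usage per process and for the whole node.

  # Bytes used by a single process, or nil if it is no longer alive.
  def process_memory(pid) do
    case Process.info(pid, :memory) do
      {:memory, bytes} -> bytes
      nil -> nil
    end
  end

  # Total memory currently allocated by the emulator, in bytes.
  def total_memory, do: :erlang.memory(:total)

  # The n processes using the most memory, largest first.
  def top_processes(n) do
    Process.list()
    |> Enum.map(&{&1, process_memory(&1)})
    |> Enum.reject(fn {_pid, bytes} -> is_nil(bytes) end)
    |> Enum.sort_by(fn {_pid, bytes} -> bytes end, :desc)
    |> Enum.take(n)
  end
end

IO.inspect(MemoryReport.total_memory(), label: "total bytes")
IO.inspect(MemoryReport.top_processes(5), label: "top 5 processes")
```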

Solution 1 — use fullsweep_after on GenServer.start_link

After some investigation, I found some links that discuss memory usage in GenServer processes, including this paragraph by Sasa Juric:

One option is to set the fullsweep_after flag of the problematic process to zero or a very small value. I think that GenServer.start_link(callback_module, spawn_opt: [fullsweep_after: desired_value]) should do the job.

I also found this information in the documentation for the BIFs (Built-In Functions) of the :erlang module:

{fullsweep_after, Number}

Useful only for performance tuning. Do not use this option unless you know that there is a problem with execution times or memory consumption, and ensure that the option improves matters.

The Erlang runtime system uses a generational garbage collection scheme, using an “old heap” for data that has survived at least one garbage collection. When there is no more room on the old heap, a fullsweep garbage collection is done.

Option fullsweep_after makes it possible to specify the maximum number of generational collections before forcing a fullsweep, even if there is room on the old heap. Setting the number to zero disables the general collection algorithm, that is, all live data is copied at every garbage collection.

A few cases when it can be useful to change fullsweep_after:

If binaries that are no longer used are to be thrown away as soon as possible. (Set Number to zero.)

A process that mostly have short-lived data is fullsweeped seldom or never, that is, the old heap contains mostly garbage. To ensure a fullsweep occasionally, set Number to a suitable value, such as 10 or 20.

In embedded systems with a limited amount of RAM and no virtual memory, you might want to preserve memory by setting Number to zero. (The value can be set globally, see erlang:system_flag/2.)
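The last point mentions that the value can be set for the whole node. Here is a minimal sketch of that, intended for experimentation rather than production. `:erlang.system_flag(:fullsweep_after, n)` returns the previous value and only affects processes spawned afterwards, so the sketch restores the original value at the end. The value 20 is just an example from the range the docs suggest.

```elixir
# Read the current node-wide default.
{:fullsweep_after, original} = :erlang.system_info(:fullsweep_after)

# Change it for processes spawned from now on; the previous value is returned.
^original = :erlang.system_flag(:fullsweep_after, 20)

# A process spawned after the change picks up the new default.
pid =
  spawn(fn ->
    receive do
      :stop -> :ok
    end
  end)

{:garbage_collection, gc_info} = Process.info(pid, :garbage_collection)
IO.inspect(gc_info[:fullsweep_after], label: "fullsweep_after of new process")

# Clean up: stop the process and restore the original default.
send(pid, :stop)
:erlang.system_flag(:fullsweep_after, original)
```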

Solution 2 — use :hibernate on the GenServer

Investigating a little more, I found another paragraph in the docs for GenServer:

Returning {:reply, reply, new_state, :hibernate} is similar to {:reply, reply, new_state} except the process is hibernated and will continue the loop once a message is in its message queue.

If a message is already in the message queue this will be immediately. Hibernating a GenServer causes garbage collection and leaves a continuous heap that minimises the memory used by the process.

…

Once you know which processes are causing the problem, a simple fix could be to hibernate the process after every message.

This is done by including :hibernate in the result tuple of handle_* callbacks (e.g. {:noreply, next_state, :hibernate}). This will reduce the throughput of the process, but can do wonders for your memory usage.
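A minimal sketch of both return forms from the quoted docs follows, using a hypothetical `HibernatingCache` module. `handle_call/3` hibernates with a four-element `:reply` tuple, and `handle_info/2` hibernates with a three-element `:noreply` tuple. The state survives hibernation. What is discarded is garbage such as the temporary list built in `handle_info/2`.

```elixir
defmodule HibernatingCache do
  # Hypothetical GenServer that hibernates after every message it handles.
  use GenServer

  def start_link(initial), do: GenServer.start_link(__MODULE__, initial)

  @impl true
  def init(initial), do: {:ok, initial}

  @impl true
  def handle_call(:get, _from, state) do
    # Reply, then hibernate until the next message arrives.
    {:reply, state, state, :hibernate}
  end

  @impl true
  def handle_info({:put, value}, _state) do
    # Build short-lived data to simulate a work cycle; it becomes garbage
    # as soon as this callback returns.
    _temporary = Enum.map(1..100_000, &(&1 * 2))
    {:noreply, value, :hibernate}
  end
end

{:ok, pid} = HibernatingCache.start_link(:empty)
send(pid, {:put, :fresh})
# Messages from one sender arrive in order, so {:put, ...} is handled first.
IO.inspect(GenServer.call(pid, :get), label: "state after hibernation")
```

Every hibernation performs a garbage collection, and the process must wake up for the next message. This is the throughput cost the quote warns about.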

After reading this information, I decided to experiment with different solutions and compare the results.

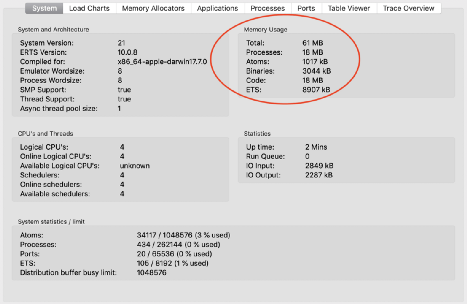

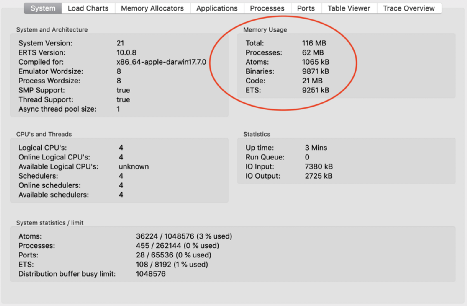

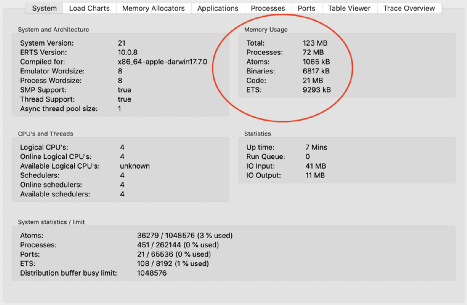

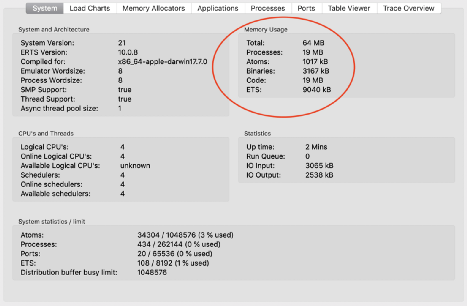

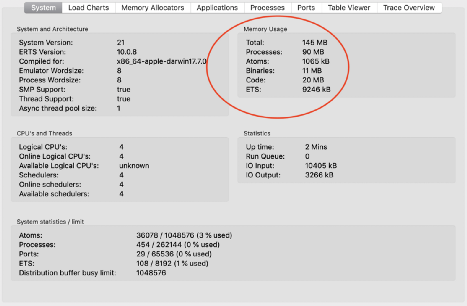

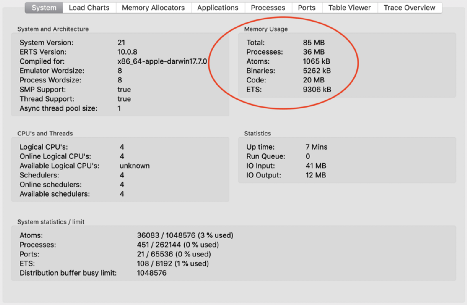

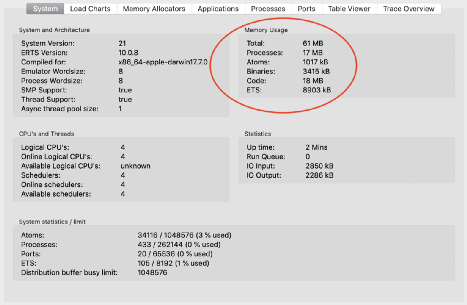

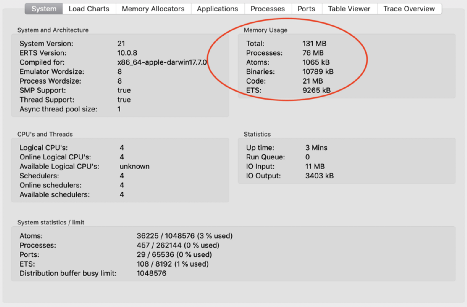

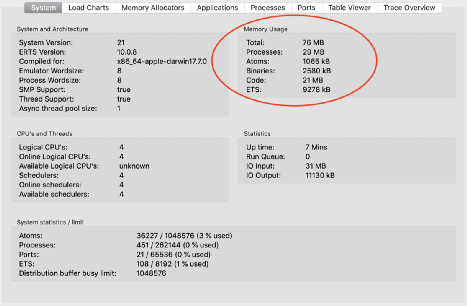

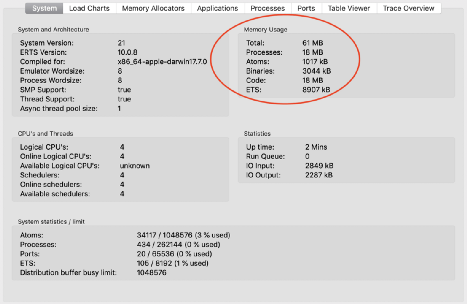

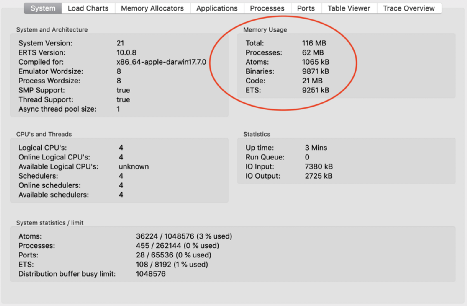

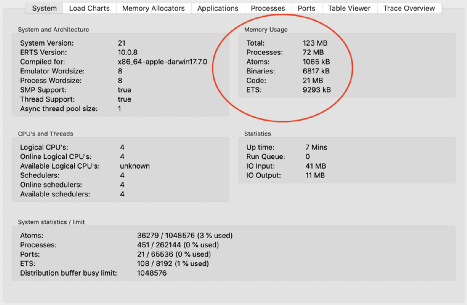

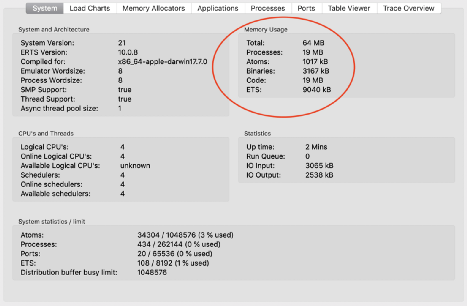

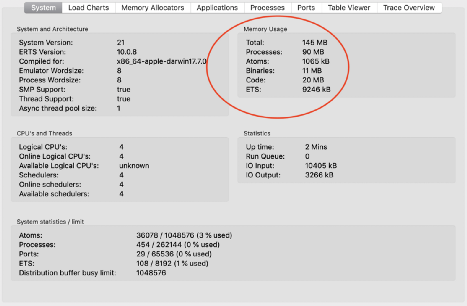

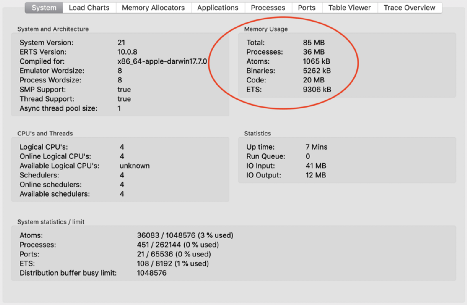

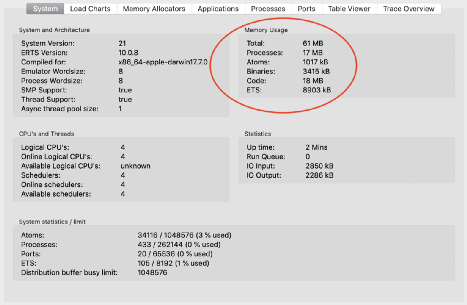

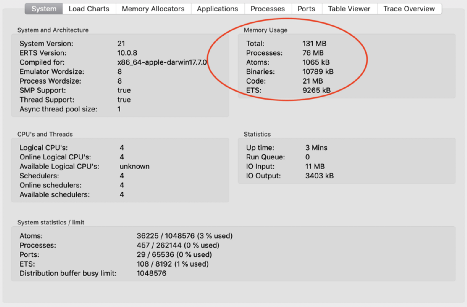

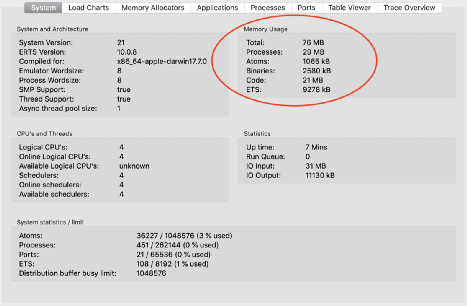

In the screenshots, the total memory usage and the process memory usage are circled in red. These are the values I compare between tests.

Test 1 - Test with the default fullsweep_after value (65535)

In the initial test, I changed nothing from my previous code in order to obtain the current status of memory consumption.

```elixir
def start_link(state) do
  # handle_string_to_atom/1 is a helper from the application (not shown).
  GenServer.start_link(
    __MODULE__,
    state,
    name: handle_string_to_atom("proj_#{state.project_id}")
  )
end
```

After all the processes have started:

genserver memory usage after start


After all the processes have started and 5 of them have started executing their work:

genserver memory usage mid execution


After all the processes have started and 5 of them have executed their work:

genserver memory usage execution finished


Test 2 - Test with fullsweep_after with a value of 0

In this test, I set fullsweep_after to 0, so every garbage collection of the GenServer process is a fullsweep.

```elixir
def start_link(state) do
  GenServer.start_link(
    __MODULE__,
    state,
    name: handle_string_to_atom("proj_#{state.project_id}"),
    # Passed to the spawned process: every GC becomes a fullsweep.
    spawn_opt: [fullsweep_after: 0]
  )
end
```
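To confirm that the `spawn_opt` option actually reached the GenServer process, you can read it back with `Process.info/2`. The sketch below is minimal and uses a hypothetical `TunedWorker` module, since the real module and `handle_string_to_atom/1` are not shown. It starts one process with the default options, as in Test 1, and one with `fullsweep_after: 0`, as in Test 2.

```elixir
defmodule TunedWorker do
  # Hypothetical minimal GenServer used only to inspect GC settings.
  use GenServer

  def start_link(state, opts \\ []), do: GenServer.start_link(__MODULE__, state, opts)

  @impl true
  def init(state), do: {:ok, state}
end

# Reads the fullsweep_after value the VM applies to the given process.
fullsweep_after = fn pid ->
  {:garbage_collection, info} = Process.info(pid, :garbage_collection)
  Keyword.fetch!(info, :fullsweep_after)
end

{:ok, default_pid} = TunedWorker.start_link(%{})
{:ok, tuned_pid} = TunedWorker.start_link(%{}, spawn_opt: [fullsweep_after: 0])

# The first value is the node-wide default; the second should be 0.
IO.inspect(fullsweep_after.(default_pid), label: "default options")
IO.inspect(fullsweep_after.(tuned_pid), label: "spawn_opt: [fullsweep_after: 0]")
```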

After all the processes have started:

genserver memory usage after start with fullsweep-after at 0


After all the processes have started and 5 of them have started executing their work:

genserver memory usage after start with fullsweep-after at 0 mid execution


After all the processes have started and 5 of them have executed their work once:

genserver memory usage after start with fullsweep-after at 0 execution finished


Test 3 - Test with :hibernate process

In this test, I force the GenServer to hibernate after every execution.

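```elixir
# handle_* stands for the callback that ends a work cycle, e.g. handle_info/2
# for the periodic message (the :work name is illustrative).
def handle_info(:work, state) do
  # ... execute the work ...
  {:noreply, state, :hibernate}
end
```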

After all the processes have started:

genserver memory usage after start with :hibernate


After all the processes have started and 5 of them have started executing their work:

genserver memory usage after start with :hibernate mid execution


After all the processes have started and 5 of them have executed their work once:

genserver memory usage after start with :hibernate execution finished


Lesson learned

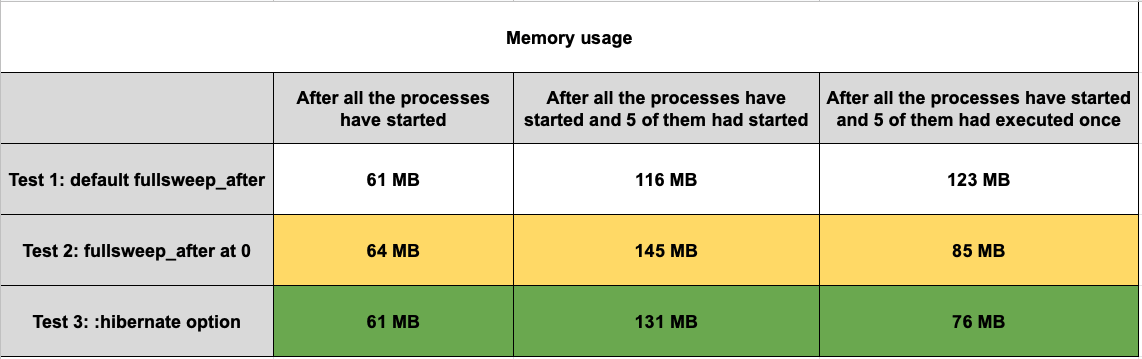

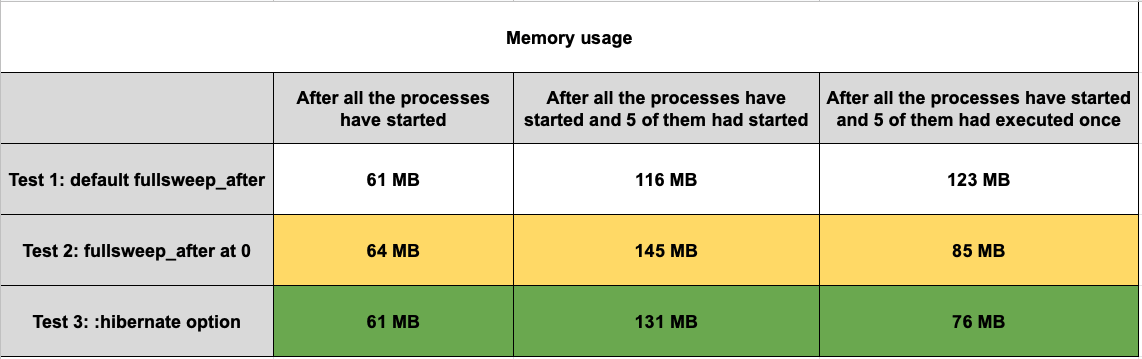

Comparison of results between tests


None of these options is a replacement for efficient code, and they should be used thoughtfully.

Another important point is that when I forced a fullsweep on every garbage collection (fullsweep_after: 0), CPU usage increased drastically, so you need to be careful with these changes.

In my case, the best solution was to hibernate the process after each execution, but you should test and analyse which solution works best for your problem.

Useful links